Definitive Guide to Agentic Frameworks in 2026: LangGraph, CrewAI, AG2, OpenAI and more

This post is written as of Feb 26th 2026 and we will update it as things progress.

Everyone's building agents right now. If you've been anywhere near the AI engineering space in the past year, you've watched the number of "agent frameworks" explode from a handful to... a lot. And honestly, picking the right one has become its own kind of problem.

We started working on agentic AI in production in 2025, and even in that short time we've seen how many frameworks there are to build with. Some of them are genuinely great. Some are more in the "interesting, but not that interesting" category.

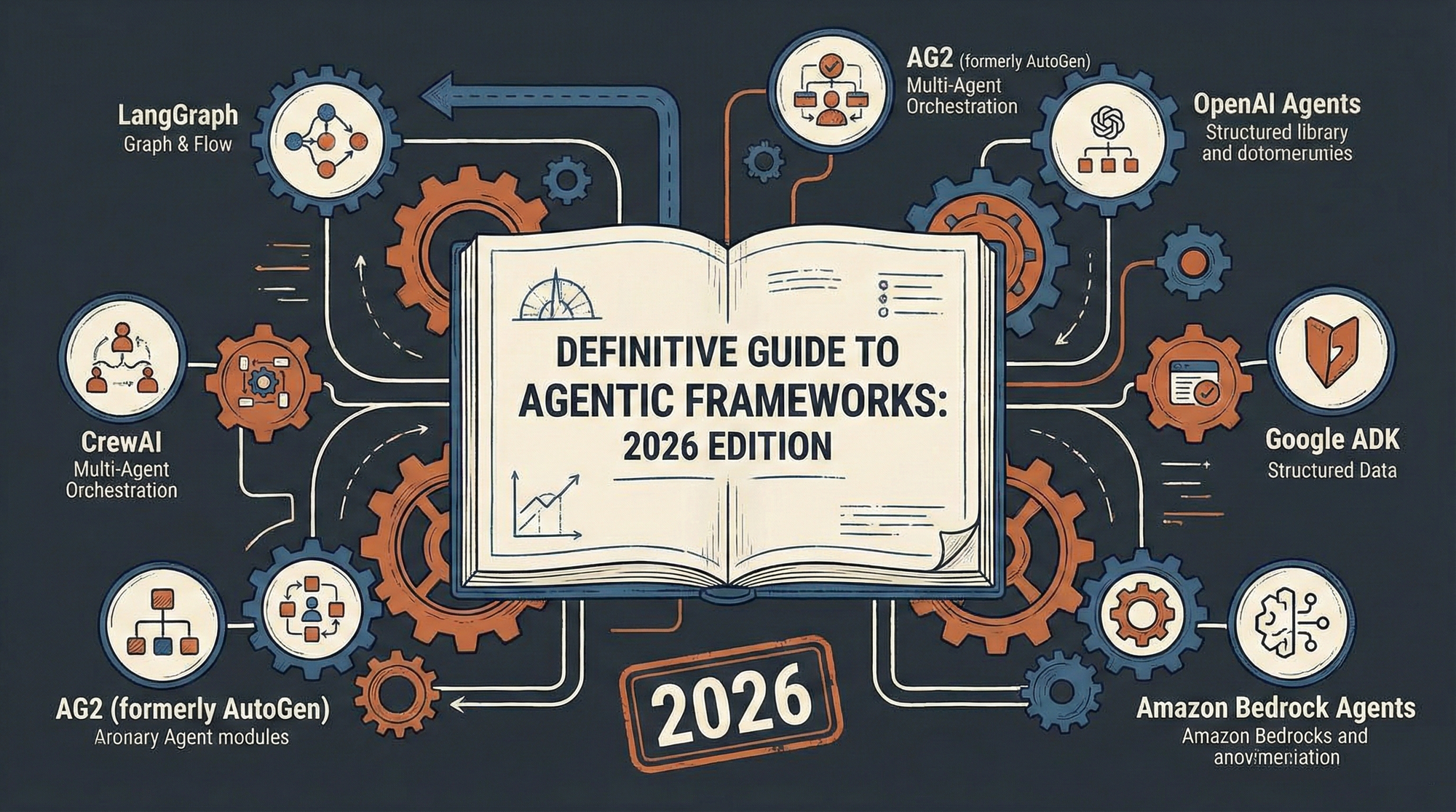

This post is our honest take on seven of the most popular agentic development frameworks in 2026: LangGraph, CrewAI, AG2 (formerly AutoGen), OpenAI Agents SDK, Pydantic AI, Google ADK, and Amazon Bedrock Agents. We'll cover what each one actually does, where it shines, where it falls short, what it costs, and whether you should care about it for your specific situation.

TL;DR

Here's everything at a glance. Scroll right on mobile to see all frameworks. More details after this table.

| Aspect | LangGraph | CrewAI | AG2 | OpenAI SDK | Pydantic AI | Google ADK | Bedrock Agents |
|---|---|---|---|---|---|---|---|
| GitHub Stars | 25k | 44.6k | 4.2k | 19.1k | 15.1k | 18k | N/A (Managed) |
| Open Source | Yes (MIT) | Yes + Commercial | Yes (Apache 2.0) | Yes (MIT) | Yes (MIT) | Yes (Apache 2.0) | No (Managed) |
| Languages | Python, JS/TS | Python | Python | Python, JS/TS | Python | Python, TS, Go, Java | Python, JS, Java, .NET |
| Model Agnostic | Yes | Yes | Yes | Partial (OpenAI-first) | Yes (25+ providers) | Yes (Gemini-first) | Yes (Bedrock FMs) |
| Ease of Use | Moderate-Hard | Easy | Moderate | Easy | Moderate | Moderate | Easy-Moderate |
| Best For | Production stateful workflows | Rapid multi-agent dev | Research & experimentation | OpenAI ecosystem agents | Type-safe production agents | Google/multi-lang teams | AWS enterprise deploys |
| Orchestration | Graph-based state machines | Sequential/Hierarchical | Conversation-based | Agent handoffs | Agent tools + graphs | Workflow + LLM routing | FM auto-orchestration |
| Observability | LangSmith (excellent) | Built-in + OTel | Basic (self-managed) | Built-in tracing | Logfire (OTel) | Built-in evals + GCP | CloudWatch/CloudTrail |
| Enterprise Security | SSO, RBAC, self-hosted | SSO, RBAC, VPC, SOC2 | None built-in | OpenAI platform | Type-safe + self-managed infra | GCP + Vertex AI | IAM, VPC, HIPAA |
| Framework Cost | Free (LangSmith paid) | Free to $25+/mo | Free | Free (API costs) | Free (Logfire paid) | Free (GCP costs) | Pay-per-use |
| Production Ready | High | High | Low | High | High | High | High |
| Experimentation | Medium | High | High | High | Medium | Medium | Low |

1. LangGraph

LangGraph is the low-level workhorse of the LangChain ecosystem. If you've used LangChain before, think of LangGraph as the layer underneath — it's where you define agent workflows as actual directed graphs with nodes, edges, and explicit state transitions. It's got about 25k GitHub stars and it's used by companies like Klarna and Replit, so it's not just a toy.

Philosophy: The philosophy here is very deliberate: LangGraph does not try to abstract away your architecture decisions. You decide how state flows, when to branch, when to loop, when to hand off to a human. It's inspired by things like Google's Pregel and Apache Beam, which tells you a lot about the kind of developer it's aimed at. If "graph-based state machine" sounds exciting to you, this is your framework. If it sounds exhausting, keep reading.
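To make "graph-based state machine" a bit more concrete, here is a minimal sketch in plain Python. It does not use LangGraph's API; the names `run_graph`, `NODES`, `EDGES`, and the draft/review nodes are all made up for illustration. The idea it tries to show is the one described above: nodes transform a shared state, edges decide what runs next, and a conditional edge lets the workflow loop until a condition is met.

```python
from typing import Any, Callable, Dict

State = Dict[str, Any]
END = "END"  # hypothetical sentinel marking the end of the graph


def draft(state: State) -> State:
    # Node: produce (or revise) a draft and count the attempt.
    attempts = state["attempts"] + 1
    return {**state, "attempts": attempts, "draft": f"draft v{attempts}"}


def review(state: State) -> State:
    # Node: approve once the draft has been written at least twice.
    return {**state, "approved": state["attempts"] >= 2}


def route_after_review(state: State) -> str:
    # Conditional edge: loop back to "draft" until the review passes.
    return END if state["approved"] else "draft"


NODES: Dict[str, Callable[[State], State]] = {"draft": draft, "review": review}
EDGES: Dict[str, Callable[[State], str]] = {
    "draft": lambda state: "review",  # fixed edge
    "review": route_after_review,     # conditional edge
}


def run_graph(entry: str, state: State, max_steps: int = 20) -> State:
    # Walk the graph from the entry node until END or the step budget runs out.
    current = entry
    for _ in range(max_steps):
        if current == END:
            return state
        state = NODES[current](state)
        current = EDGES[current](state)
    raise RuntimeError("graph did not reach END within max_steps")


final_state = run_graph("draft", {"attempts": 0, "approved": False})
print(final_state)
```

Everything the workflow can do is visible in `NODES` and `EDGES`, which is roughly the trade LangGraph asks you to make: more explicit wiring in exchange for knowing exactly how state moves.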

Good: Where it really shines is production. LangGraph handles durable execution (your agent can crash and resume), human-in-the-loop workflows, and long-running stateful processes well. Pair it with LangSmith for observability and you get one of the best debugging experiences available for agent systems. LangSmith traces are genuinely useful, not just pretty dashboards.

Bad: The downside? It's verbose. Building anything in LangGraph takes more code than most alternatives, and the learning curve is real. If you just want to prototype a multi-agent system over a weekend, you'll probably get frustrated. The graph abstraction is powerful but it forces you to think about things that higher-level frameworks hide from you — which is either a feature or a bug depending on your needs.

Costs: LangGraph itself is MIT-licensed and free. But the full production story involves LangSmith (free tier gets you 5k traces/month, Plus is $39/seat/month) and the LangChain Agent Server for deployment. The enterprise tier with self-hosting and SSO is custom-priced.

Language supported: It supports Python and JavaScript/TypeScript. Security on the enterprise plan includes self-hosted deployment, SSO, and RBAC — your data doesn't have to leave your VPC.

2. CrewAI

CrewAI is kind of the polar opposite of LangGraph. Where LangGraph gives you a box of Legos and says "build whatever you want," CrewAI gives you a pre-assembled robot and says "just tell it what to do." It has over 44,000 GitHub stars — the most of any framework on this list — and there's a reason for that: it's genuinely easy to get started with.

Philosophy: The core idea is that you define a "crew" of agents, each with a role, and give them tasks to work on together. You can run them sequentially, hierarchically, or in hybrid patterns. There's a visual editor (Studio) where you can drag and drop workflows without writing code, which is a big deal if you have non-technical stakeholders who want to be involved in agent design.
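As a rough picture of the sequential pattern, here is a small plain-Python sketch. It is not CrewAI code: `RoleAgent`, `Task`, and `run_sequential` are hypothetical names, and the lambdas stand in for LLM calls. It only illustrates the shape of the idea, where each task goes to an agent with a role and each output becomes context for the next task.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class RoleAgent:
    role: str
    # (task description, upstream context) -> output; stands in for an LLM call.
    work: Callable[[str, str], str]


@dataclass
class Task:
    description: str
    agent: RoleAgent


def run_sequential(tasks: List[Task]) -> List[str]:
    # Run tasks in order, feeding each result to the next task as context.
    outputs: List[str] = []
    context = ""
    for task in tasks:
        result = task.agent.work(task.description, context)
        outputs.append(f"[{task.agent.role}] {result}")
        context = result
    return outputs


researcher = RoleAgent("Researcher", lambda desc, ctx: f"notes on {desc}")
writer = RoleAgent("Writer", lambda desc, ctx: f"{desc} based on {ctx}")

crew_outputs = run_sequential([
    Task("agent frameworks", researcher),
    Task("summary", writer),
])
for line in crew_outputs:
    print(line)
```

A hierarchical setup would put a manager agent in charge of choosing which task runs next, instead of the fixed order used here.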

Good: For rapid prototyping and getting something deployed fast, CrewAI is probably the best option out there right now. The enterprise platform (CrewAI AMP) adds triggers for Gmail, Slack, Salesforce, plus deployment management and RBAC. If you need to go from "we should build an agent for this" to "it's running in production" in a week, CrewAI makes that realistic.

Bad: The trade-off is control. When things go wrong — and with agents, things will go wrong — the high-level abstractions can make it harder to figure out what happened and why. You're somewhat at the mercy of how CrewAI decides to orchestrate things under the hood. For simple workflows this is fine. For complex, mission-critical systems where you need to reason about every decision the agent makes, it can feel like a black box.

Costs: The open-source framework is free. The hosted platform has a free tier (50 executions/month), a $25/month Professional tier (100 executions), and custom Enterprise pricing that goes up to 30,000 executions with self-hosted K8s/VPC deployment. Enterprise includes SOC2, SSO, and PII masking.

Language supported: Python only. The observability story is solid: built-in tracing, OpenTelemetry, hallucination scores, and human-in-the-loop guardrails.

3. AG2 (formerly AutoGen)

AG2 has a complicated backstory. It started as Microsoft's AutoGen — one of the earliest and most influential multi-agent frameworks — and then got spun out as an independent open-source project. It now calls itself "The Open-Source AgentOS." The ag2ai/ag2 repo has about 4,200 stars, though the original Microsoft AutoGen repo has way more.

Philosophy: The core concept is "conversable agents" — agents that talk to each other in structured conversations. You set up group chats, swarms, or one-on-one exchanges and let agents debate, collaborate, and solve problems through dialogue. It's a genuinely interesting paradigm, and for research and experimentation, it's one of the more creative frameworks available.
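The conversation-as-orchestration idea can be sketched without any framework. The snippet below is plain Python, not AG2's API; `run_chat`, `counter_agent`, and `checker_agent` are invented for illustration, and the reply functions stand in for LLM-backed agents. Two agents take turns replying to each other's last message until one of them emits a termination marker or the turn budget runs out.

```python
from typing import Callable, List, Tuple

Reply = Callable[[str], str]


def counter_agent(message: str) -> str:
    # Increments the number it receives.
    return str(int(message) + 1)


def checker_agent(message: str) -> str:
    # Ends the chat once the number reaches 3, otherwise passes it back.
    return "TERMINATE" if int(message) >= 3 else message


def run_chat(
    first: Tuple[str, Reply],
    second: Tuple[str, Reply],
    opening: str,
    max_turns: int = 10,
) -> List[Tuple[str, str]]:
    # Alternate between the two agents, each replying to the previous message.
    transcript: List[Tuple[str, str]] = []
    speakers = [first, second]
    message = opening
    for turn in range(max_turns):
        name, reply = speakers[turn % 2]
        message = reply(message)
        transcript.append((name, message))
        if message == "TERMINATE":
            break
    return transcript


transcript = run_chat(
    ("counter", counter_agent),
    ("checker", checker_agent),
    opening="0",
)
for speaker, text in transcript:
    print(f"{speaker}: {text}")
```

Note that the control flow lives in the conversation itself: there is no graph or task list, only a termination condition and a turn limit.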

Bad: Here's where we have to be blunt, though: AG2 is not production-ready for most enterprise use cases. There's no first-party observability platform — you're on your own for logging and tracing. There are no built-in enterprise security features whatsoever. The code execution capabilities need careful sandboxing that you have to set up yourself. The transition from AutoGen to AG2 also fragmented the ecosystem, so documentation and community resources can be hit-or-miss.

Good: If you're doing academic research, prototyping conversational agent architectures, or just want to experiment with multi-agent dynamics, AG2 is great for that. It's completely free and open-source with no paid tiers. But if someone tells you to deploy it in production for a customer-facing application, we'd push back hard on that recommendation.

Costs & Language supported: Python only. No commercial platform, no paid support, purely community-driven.

4. OpenAI Agents SDK

OpenAI released their Agents SDK in March 2025, and it's already at 19k+ GitHub stars. It's the successor to their experimental Swarm SDK, and it shows — the design is clean, opinionated, and clearly built by people who've watched developers struggle with agent orchestration.

Philosophy: The SDK gives you five primitives: Agents, Handoffs, Guardrails, Sessions, and Tracing. That's basically it. You define agents with instructions and tools, wire them together with handoffs, add guardrails for safety, and get tracing for free. A multi-agent triage system can be built in about 30 lines of Python. It's refreshingly simple.
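Here is a small plain-Python sketch of the triage-with-handoffs pattern, including a trivial input guardrail. It deliberately avoids the real SDK so it runs without an API key; `SketchAgent`, `pick_handoff`, `input_guardrail`, and `run` are hypothetical names, and keyword matching stands in for the model deciding when to hand off.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, Optional


@dataclass
class SketchAgent:
    name: str
    respond: Callable[[str], str]  # stands in for an LLM call
    handoffs: Dict[str, "SketchAgent"] = field(default_factory=dict)
    # Returns the name of a handoff target, or None to answer directly.
    pick_handoff: Optional[Callable[[str], Optional[str]]] = None


def input_guardrail(text: str) -> None:
    # Reject input before any agent sees it.
    if not text.strip():
        raise ValueError("empty input rejected by guardrail")


def run(agent: SketchAgent, text: str, max_handoffs: int = 5) -> str:
    input_guardrail(text)
    for _ in range(max_handoffs + 1):
        target = agent.pick_handoff(text) if agent.pick_handoff else None
        if target is None:
            return f"{agent.name}: {agent.respond(text)}"
        agent = agent.handoffs[target]  # hand control to the specialist
    raise RuntimeError("too many handoffs")


def route(text: str) -> Optional[str]:
    # Keyword routing as a stand-in for the model's handoff decision.
    lowered = text.lower()
    if "invoice" in lowered:
        return "billing"
    if "error" in lowered:
        return "support"
    return None


billing = SketchAgent("billing", lambda t: "I can help with your invoice.")
support = SketchAgent("support", lambda t: "Let's debug that together.")
triage = SketchAgent(
    "triage",
    respond=lambda t: "How can I help?",
    handoffs={"billing": billing, "support": support},
    pick_handoff=route,
)

answer = run(triage, "My invoice is wrong")
print(answer)
```

In the real SDK the routing decision is made by the model and tracing records each hop; the sketch only shows why the pattern stays small: an agent either answers or passes control to another agent.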

Good: What makes this especially compelling is how it pairs with OpenAI's Responses API and built-in tools. Web search, file search, and computer use are all available as first-class tools — no third-party integrations needed. If you're already using OpenAI models, the developer experience here is hard to beat. Companies like Coinbase and Box have used it to prototype and deploy agents in days, not weeks.

Bad: The obvious limitation is vendor lock-in. Yes, the SDK technically works with other providers via Chat Completions-compatible endpoints, but let's be real — the tightest integration and best experience is with OpenAI models. If you care about model flexibility, this isn't ideal. Also, if you need complex stateful workflows with durable execution, LangGraph is still a better fit. The Agents SDK is more about quick, practical agent systems than deeply complex orchestration.

Costs & Language supported: The SDK is free and open-source. You pay standard OpenAI API rates for models, plus tool-specific costs: web search runs $25-30 per 1k queries, file search $2.50/1k queries, computer use $3/1M input tokens. Tracing is built in at no extra cost. Python and JavaScript/TypeScript.
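To get a feel for how the tool charges add up, here is a back-of-the-envelope estimator using only the web search and file search rates quoted above. The query volumes are made-up inputs, and model token costs are left out entirely, so treat the result as a lower bound on a real bill.

```python
from typing import Tuple

# Rates as quoted in this post, in USD per 1,000 queries.
WEB_SEARCH_PER_1K = (25.00, 30.00)  # low and high end of the quoted range
FILE_SEARCH_PER_1K = 2.50


def monthly_tool_cost(web_queries: int, file_queries: int) -> Tuple[float, float]:
    # Returns (low, high) estimated monthly tool spend, excluding model tokens.
    file_cost = file_queries / 1000 * FILE_SEARCH_PER_1K
    low = web_queries / 1000 * WEB_SEARCH_PER_1K[0] + file_cost
    high = web_queries / 1000 * WEB_SEARCH_PER_1K[1] + file_cost
    return round(low, 2), round(high, 2)


# Hypothetical workload: 20k web searches and 100k file searches per month.
low, high = monthly_tool_cost(web_queries=20_000, file_queries=100_000)
print(f"Estimated tool cost: ${low:,.2f} to ${high:,.2f} per month")
```

For that hypothetical workload the tools alone come to $750 to $850 per month, which is worth knowing before web search becomes the default for every agent turn.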

5. Pydantic AI

This one has a special place in our hearts. Pydantic AI is built by the same team behind Pydantic — the validation library that literally powers the internals of the OpenAI SDK, the Anthropic SDK, LangChain, CrewAI, and basically every other AI framework in Python. Their tagline is essentially "why use the derivative when you can go straight to the source?" and honestly, it's a fair point.

Philosophy: The design philosophy is all about type safety and developer ergonomics. If you've used FastAPI and loved the experience of typed endpoints, validated inputs, and great IDE autocompletion — that's exactly what Pydantic AI feels like for agents. Every agent has typed dependencies, typed outputs, and validated tool calls. Errors get caught at write-time instead of blowing up in production. It's the closest thing to "if it compiles, it works" that exists in the Python agent world.
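The snippet below is a standard-library sketch of the runtime half of that idea: model output is parsed into a typed structure at the boundary, and anything that doesn't match is rejected before the rest of the program sees it. It is not Pydantic AI code (Pydantic does this far more thoroughly); `RefundDecision` and `parse_decision` are hypothetical, and the JSON strings stand in for raw model responses.

```python
import json
from dataclasses import dataclass


@dataclass(frozen=True)
class RefundDecision:
    approved: bool
    amount_cents: int


def parse_decision(raw: str) -> RefundDecision:
    # Validate raw model output against the expected shape and types.
    data = json.loads(raw)
    if not isinstance(data, dict):
        raise TypeError("expected a JSON object")
    approved = data.get("approved")
    amount = data.get("amount_cents")
    if not isinstance(approved, bool):
        raise TypeError("approved must be a bool")
    # bool is a subclass of int in Python, so exclude it explicitly.
    if isinstance(amount, bool) or not isinstance(amount, int) or amount < 0:
        raise TypeError("amount_cents must be a non-negative int")
    return RefundDecision(approved=approved, amount_cents=amount)


good = parse_decision('{"approved": true, "amount_cents": 1250}')
print(good)

try:
    parse_decision('{"approved": "yes", "amount_cents": 1250}')
    rejected = False
except TypeError as exc:
    rejected = True
    print(f"rejected: {exc}")
```

Because downstream code only ever receives a `RefundDecision`, a type checker can then verify how that value is used, which is where the "caught at write-time" part comes in.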

Good: The model-agnostic story is genuinely impressive. Over 25 providers are supported: OpenAI, Anthropic, Gemini, DeepSeek, Grok, Cohere, Mistral — plus platforms like Azure AI Foundry, Amazon Bedrock, Vertex AI, Ollama, and many more. If your provider isn't listed, you can implement a custom model. They also support MCP (Model Context Protocol) and A2A (Agent2Agent) for interoperability, plus durable execution for long-running workflows.

Observability is handled through Pydantic Logfire, which is OpenTelemetry-based — meaning it works with any OTel-compatible platform you already have. The built-in eval support for systematic testing is a nice touch that most frameworks still lack.

Bad: The downside? It's firmly code-first. No visual editor, no drag-and-drop. And the type-heavy approach, while powerful, can feel like overkill if you just want to hack together a quick prototype. If your team isn't comfortable with Python type hints and dependency injection patterns, there's a real learning curve. But for teams that value code quality and are building systems where "the agent returned the wrong type" could be a serious problem — financial services, healthcare, legal — this is probably the most robust choice.

Costs & Language supported: Free and MIT-licensed. Logfire has separate pricing. 15k+ GitHub stars and growing fast. Python only.

6. Google Agent Development Kit (ADK)

Google's ADK is interesting because it's the only framework on this list with serious multi-language support. Python, TypeScript, Go, and Java. If you're running a team with JVM or Go services and you don't want to spin up a Python microservice just for your agents, ADK is basically your only option among the major frameworks. It's got about 18k GitHub stars.

Philosophy: The design philosophy is "make agent development feel like software development" — modular components, clear separation of concerns, familiar programming paradigms. You get workflow agents (Sequential, Parallel, Loop) for predictable pipelines, and LLM-driven dynamic routing when you need agents to make their own decisions. It's optimized for Gemini and Google Cloud but explicitly model-agnostic and deployment-agnostic.
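The three workflow shapes are easy to picture as plain function combinators. The sketch below is not ADK code; `sequential`, `parallel`, `loop`, and the toy steps are hypothetical, with ordinary functions standing in for agents. It assumes each step takes a state dict and returns an updated one.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, Dict, List

State = Dict[str, int]
Step = Callable[[State], State]


def sequential(steps: List[Step]) -> Step:
    # Run steps one after another, threading the state through.
    def run(state: State) -> State:
        for step in steps:
            state = step(state)
        return state
    return run


def parallel(steps: List[Step]) -> Step:
    # Run steps concurrently on copies of the state, then merge the results.
    def run(state: State) -> State:
        with ThreadPoolExecutor() as pool:
            results = list(pool.map(lambda step: step(dict(state)), steps))
        merged = dict(state)
        for result in results:
            merged.update(result)
        return merged
    return run


def loop(step: Step, done: Callable[[State], bool], max_iterations: int = 10) -> Step:
    # Repeat a step until the exit condition holds or the budget runs out.
    def run(state: State) -> State:
        for _ in range(max_iterations):
            if done(state):
                break
            state = step(state)
        return state
    return run


def add_a(state: State) -> State:
    return {**state, "a": 1}


def add_b(state: State) -> State:
    return {**state, "b": 2}


def bump(state: State) -> State:
    return {**state, "n": state.get("n", 0) + 1}


pipeline = sequential([
    parallel([add_a, add_b]),
    loop(bump, done=lambda s: s.get("n", 0) >= 3),
])
result = pipeline({})
print(result)
```

These shapes are deterministic by construction, which is the point of workflow agents; LLM-driven routing replaces the fixed wiring with a model's decision about which step runs next.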

Good: For Google Cloud shops, the deployment story is strong. Vertex AI Agent Engine and Cloud Run give you production-ready infrastructure without too much fuss. The built-in evaluation framework is also worth mentioning — it lets you test both final response quality and step-by-step execution against predefined test cases, which is something a lot of teams struggle to set up from scratch.

Bad: The honest downside is maturity. ADK is newer than LangGraph or CrewAI, and the community and third-party ecosystem reflect that. Each language SDK may also be at a different maturity level: the Python SDK is the most polished, while the Go and Java SDKs are catching up. If you need a rich library of pre-built tools and integrations, LangChain's ecosystem is still larger.

Costs & Language supported: Open-source and free. You pay for Google Cloud infrastructure and Gemini API calls (or whatever model provider you choose).

7. Amazon Bedrock Agents

Bedrock Agents is the odd one out on this list — it's not an open-source framework at all. It's a fully managed AWS service. You don't write orchestration code; you select a foundation model, write natural language instructions, and the service figures out how to break down tasks, call APIs, and manage memory. AWS recently added AgentCore on top of this for deploying agents from any framework at scale.

Good: For enterprise teams already deep in the AWS ecosystem, this is genuinely appealing. The integration with Lambda, S3, DynamoDB, and Knowledge Bases means you can wire agents into existing infrastructure without building a bunch of glue code. Multi-agent collaboration with supervisor agents is built in. And the security story is probably the strongest on this list — IAM, VPC, encryption everywhere, Bedrock Guardrails for content filtering and PII detection, plus SOC, ISO, and HIPAA compliance through AWS infrastructure.

Bad: The trade-off is flexibility. You're trading control for convenience, full stop. Want to experiment with a novel orchestration pattern? Not really possible here. Want to switch to a non-Bedrock model? That's going to be painful. Need to iterate quickly on agent architecture? The console-driven workflow is slower than writing code. Teams new to AWS will also face a steep learning curve around action groups, Lambda integration, and knowledge base setup.

Costs & Language supported: No upfront costs — pay-per-use based on foundation model tokens plus feature-specific charges for knowledge bases and guardrails. Language-agnostic for agent definition (you use the console or natural language), with SDK access via Python (Boto3), JavaScript, Java, .NET and others for programmatic control.

So Which One Should You Actually Pick?

Look, there's no universal answer here — anyone who tells you otherwise is selling something. But after working with most of these, here's how we'd think about the decision:

If you're building complex, stateful production workflows and your team has strong engineering fundamentals, go with LangGraph. It's the most mature, and LangSmith's observability is genuinely best-in-class. You'll write more code, but you'll understand every line of it.

If you need to ship fast and want the lowest barrier to entry, CrewAI is hard to beat. The visual editor alone makes it worth evaluating, and the community is massive. Just know that you're trading some control for speed.

If you're doing research or exploring multi-agent conversation patterns, AG2 is the playground for that. Just don't bring it to a production deployment conversation.

If you're all-in on OpenAI and want the smoothest possible developer experience, the OpenAI Agents SDK is the obvious choice. The built-in tools alone — web search, file search, computer use — save you a ton of integration work. Just accept the vendor dependency.

If your team cares deeply about code quality and type safety, Pydantic AI is the most thoughtfully designed framework on this list. It's what we reach for when the output format really matters and "the agent returned garbage" isn't an acceptable failure mode.

If you're a multi-language team or Google Cloud native, Google ADK is your only real option on this list for production-quality Go or Java agent development, and it covers TypeScript too. The Vertex AI integration is a nice bonus.

If you're an AWS enterprise that wants someone else to handle the infrastructure, Amazon Bedrock Agents is the managed path. Best security story, least flexibility. That's the deal.

One last thing worth noting: these frameworks are all converging. MCP for tool interoperability, A2A for agent-to-agent communication, OpenTelemetry for observability — these standards are reducing switching costs across the board. The framework you pick today doesn't have to be the framework you're stuck with forever. So bias toward whatever lets your team move fastest right now, and trust that migration will get easier over time.

This comparison reflects our experience and research as of February 2026. Things move fast in this space — check the latest docs before making a final call.